After coming back from WM2026 in Phoenix, I found myself reflecting on something slightly unexpected from the trip.

John and I had a good week collaborating with Lucideon and presenting our Gamma-Crete™ work, which led to some useful discussions around shielding performance in radiation transport and the potential for more efficient packaging approaches.

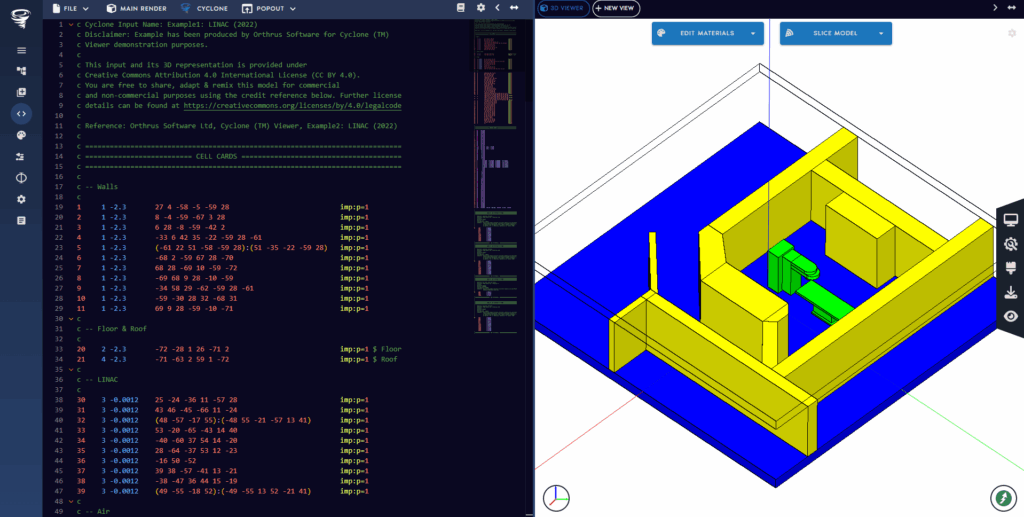

Alongside that, we also used the time while we were away to continue developing Cyclone Sage, the AI assistant we are building for Cyclone at Orthrus Software. So by the time we were heading home, I was already thinking quite hard about AI systems, how people respond to them, and what sort of trust those systems should be asking of people.

We used a Waymo while we were in Phoenix. It was John’s first time in one, so he got the full “wow” factor straight from the airport. That reaction is easy to understand. Sitting in a car with no driver still feels rather strange for the first few minutes. The steering wheel moves, the vehicle slows for traffic, people cross the road ahead, and some part of you still expects to see someone in the front taking control. But what struck me was how quickly that feeling wore off. The ride became normal far quicker than you might expect.

That was probably why my mind drifted back to an earlier Waymo trip in Austin during the ANS NCSD conference. What stayed with me from both experiences was the system behaviour rather than the novelty. The vehicles were cautious in a very deliberate way. If one wasn't sure whether someone was about to cross the road, it would come to a full stop rather than force the judgement. You quickly realise that the system is operating within a different logic to a confident human driver. From a safety point of view, that caution makes complete sense, even if it does not always feel natural in the way human driving often does.

There is obviously a lot of rigorous testing, measurement and assurance needed to get to this point, but it does highlight that this alone is not what people ultimately respond to. Trust is shaped just as much by how a system behaves in context as by how it is validated on paper.

What was just as interesting was the way people around the vehicles reacted. In Austin, at our hotel, the concierge tried to shoo one away as it pulled in, despite the fact that it had positioned itself perfectly to pick us up. That moment stuck with me because it showed that the question is not only whether the vehicle can do the job, but how people feel about it doing the job. A system may be operating properly, but that does not always mean people welcome it. In fact, some of the locals did not seem to like them at all. So the trust issue is not only technical; it is fundamentally social as well.

That became even clearer at the airport pick-up and drop-off in Phoenix. It was very busy, with human drivers everywhere, several Waymos moving through the same space, and the usual airport chaos where nobody hangs around and everyone is in their own world. John and I both noticed that the Waymos still behaved in a very algorithmic way. You could see each one was making predictions about what other cars were doing, but those predictions were not human in the way a taxi driver’s instincts are. There were moments when I found it easier to read the behaviour of other drivers than the system seemed to.

At the same time, it handled the situation very well. It had clear 360-degree spatial awareness, and you could see that it knew exactly where it could and couldn't fit. So although its judgement did not feel human, and although its behaviour was still recognisably algorithmic, it was still very effective. That, to me, is much more interesting than simply saying the technology is impressive. Comparing the two trips, it is also clearly improving, but it is improving in its own way rather than by becoming a human driver.

This highlights an important point: human beings are far more complex than these systems. A good taxi driver can read tone, body language, local driving habits and all sorts of context while simultaneously having a conversation with a passenger. In many situations, a good human driver is still much better at judging what is really going on. But human beings can also be distractible, inconsistent, tired, impatient, and sometimes wrong, which is when accidents can happen. The more useful design question is what kind of trust an autonomous system should earn, and on what basis.

In Waymo’s case, trust works a little differently from many other AI systems because the vehicle is not just advising you. It is acting in the world on your behalf, and everyone around it has to respond to that behaviour in real time. What people react to is not the underlying model or training data, but how the car behaves. Does it feel safe, predictable and cautious? In other words, the challenge is not just making the system capable, but making it trustworthy.

That is what brought me back to Cyclone Sage. Waymo and Sage clearly do very different things. One is an autonomous system operating in the physical world, while the other is an AI assistant intended to support engineers working with MCNP. The domains are very different, and in some ways the challenge for Sage is even less tidy because an MCNP input is highly complex and open-ended. It is not a bounded control problem involving a handful of simple inputs, i.e. steering, braking, acceleration and indicators.

Despite the obvious differences, I have found myself thinking about a related design issue, which is how you build a system for humans to use and trust without encouraging the wrong kind of trust. In the radiation shielding and criticality world, trust matters enormously because the consequences of getting things wrong are large. That does not mean Sage is like an autonomous vehicle, but it does mean the mindset around safety, visibility and responsibility has to be taken seriously.

That has shaped how we are developing Cyclone Sage. The aim is not to hide complexity or replace engineering judgement with something that sounds fluent. It is to support the workflow in a way that gives engineers more time to make the right decisions. MCNP model building and QA can be slow and cognitively heavy. Input decks are text-based and fragile, and small errors in geometry, materials, sources or physics settings can have significant consequences. In practice, engineers often work from previous inputs, inherit assumptions, and spend a lot of effort on checking.

Cyclone Sage is currently being designed with that balance in mind, keeping human oversight firmly in view. We have not hidden the syntax from the user, and we are not treating the system as a black box. The design is built around guided authoring, visible outputs, constrained generation and traceability, with the user remaining in control rather than the system quietly taking over.

That is why I do not see AI as a means of removing the engineer from the process. Instead, it creates space to explore broader design options, reduces set-up burden, and allows more focus on the judgements that matter. Used properly, this leads to better decisions, more efficient workflows, lower cost, and safer outcomes. However, this depends on establishing trust in the system from the outset.

So that is what riding in a Waymo brought back to me after WM2026. What becomes clear is that a shared design challenge is emerging across very different fields. As systems become more capable, the important question shifts away from whether they can do something impressive. It becomes about how they fit around human judgement, how they behave when the world gets messy, and what sort of trust they are really asking people to place in them.

For me, that is a far more useful way to think about AI in engineering. The goal should not be blind faith in capable systems. It should be systems that earn trust in the right way, while leaving responsibility, visibility and judgement exactly where they should be.

If you have thoughts on this or would like to continue the conversation, feel free to get in touch.

You can email us at nuclear@cerberusnuclear.com with the subject line “Trust and AI.”

Thanks for reading.